The Engineering Behind LoadMagic

Performance engineering has a preparation problem. Building reliable, correlation-complete scripts from real user flows is the hardest, most time-consuming part of the discipline. We built the engineering to solve it.

The Correlation Challenge

Dynamic value correlation sits at the centre of that problem. When a browser records a user session, it captures hundreds of values (tokens, session IDs, CSRF parameters, timestamps) as static snapshots. In a real load test, each of these values must be extracted from the response that issues it and injected into the requests that use it, or the script breaks silently.
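Here is a minimal Python sketch of a single correlation. It assumes a hypothetical login page that issues a CSRF token in a hidden form field named `csrf_token`. The recorded request still carries the stale value `abc123`. The correlated version extracts the live token from the server response with a regular expression and substitutes it before the next request is sent. The field name, values, and helper functions are illustrative assumptions, not LoadMagic's implementation.

```python
import re
from typing import Optional

# Hypothetical recorded request body: the token was captured as a static snapshot.
RECORDED_BODY = "username=alice&csrf_token=abc123"

# Illustrative extractor for a hidden form field named csrf_token.
CSRF_PATTERN = re.compile(r'name="csrf_token"\s+value="([^"]+)"')


def extract_token(response_html: str) -> Optional[str]:
    """Return the live token from a server response, or None if the extractor misses."""
    match = CSRF_PATTERN.search(response_html)
    return match.group(1) if match else None


def correlate(recorded_body: str, live_token: str) -> str:
    """Swap the stale recorded token for the live one in a form-encoded body."""
    # A function replacement stops re.sub from interpreting backslashes in the token.
    return re.sub(r"csrf_token=[^&]*", lambda _m: f"csrf_token={live_token}", recorded_body)


# Simulated login-page response during one load-test iteration.
response = '<input type="hidden" name="csrf_token" value="f9e8d7">'
token = extract_token(response)
if token is None:
    raise RuntimeError("extractor missed: csrf_token not found in response")
print(correlate(RECORDED_BODY, token))  # username=alice&csrf_token=f9e8d7
```

If the extractor misses and nothing checks for it, the stale `abc123` goes out unchanged. That is exactly the silent breakage described above.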

Most tools pick one approach and apply it everywhere. Rules engines use templates. General-purpose AI throws a large language model at every value. Deterministic scanners rely entirely on pattern matching. Each works well in its own territory — and fails outside it.

Best Tool, Best Moment

LoadMagic's approach spans the full correlation spectrum — from deterministic pattern matching through rules-based detection, algorithmic heuristics, and AI-driven analysis. Rather than committing to a single method, we apply each approach where it performs best within the overall workflow.

A session token that follows a well-known pattern doesn't need an AI agent — deterministic extraction handles it in microseconds with certainty. A novel authentication flow with an unfamiliar token lifecycle does need intelligence — but only after the deterministic layers have already mapped everything they can. An edge case that has been seen and solved before doesn't need either — it needs memory.
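The tiering described above can be sketched as an ordered fallback chain. This is a hypothetical Python illustration, not LoadMagic's code. The tier order (deterministic patterns, then memory of solved cases, then an AI agent), the pattern tables, and the `resolve` function are all assumptions. They show one idea: the expensive layer runs only when the cheaper ones return nothing.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Resolution:
    value_name: str
    tier: str        # which layer resolved the value
    extractor: str   # regex or strategy the layer proposes


# Tier 1: deterministic patterns for well-known token shapes (illustrative).
KNOWN_PATTERNS = {
    "JSESSIONID": r"JSESSIONID=([A-F0-9]+)",
    "csrf_token": r'name="csrf_token" value="([^"]+)"',
}

# Tier 2: memory of cases solved in earlier sessions (hypothetical store).
SOLVED_CASES = {"x-legacy-nonce": r'"nonce":"([^"]+)"'}


def deterministic(name: str) -> Optional[Resolution]:
    pattern = KNOWN_PATTERNS.get(name)
    return Resolution(name, "deterministic", pattern) if pattern else None


def memory(name: str) -> Optional[Resolution]:
    pattern = SOLVED_CASES.get(name)
    return Resolution(name, "memory", pattern) if pattern else None


def ai_agent(name: str) -> Optional[Resolution]:
    # Stand-in for an expensive model call; reached only when cheaper tiers fail.
    return Resolution(name, "ai", "<model-proposed extractor>")


# Cheapest and most predictable first; AI last.
TIERS: List[Callable[[str], Optional[Resolution]]] = [deterministic, memory, ai_agent]


def resolve(name: str) -> Resolution:
    for tier in TIERS:
        result = tier(name)
        if result is not None:
            return result
    raise LookupError(f"no tier could resolve {name}")


for value in ("JSESSIONID", "x-legacy-nonce", "novel_auth_blob"):
    print(value, "->", resolve(value).tier)
```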

This separation of concerns is deliberate. By reserving AI for the moments that genuinely require judgment, and using faster, cheaper, more predictable methods everywhere else, the platform stays efficient at every scale. Less inference means lower cost, faster execution, and fewer opportunities for hallucination.

The World View

At the centre of the architecture sits the World View: a living, structured representation of everything the platform knows about the application under test. It holds every dynamic value, its origin, its propagation path through subsequent requests, and every decision that has been validated or rejected.

The World View is what makes best-tool-best-moment possible. Each layer in the pipeline receives exactly the data it needs, at exactly the quality it needs, at exactly the moment it needs it. Deterministic scanners feed observations in. AI agents read curated context out. Validation results flow back to confirm or correct. The data compounds with every session, every test run, every repair.
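One way to picture the World View is as a shared store with three flows:

- Scanners write observations into it.
- AI layers read a filtered view from it.
- Validation writes verdicts back to it.

The Python sketch below assumes a deliberately simple model: one record per dynamic value, holding its origin, the requests it propagates to, and a validation status. The class and field names are hypothetical and far simpler than a production representation would be.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class DynamicValue:
    name: str
    origin: Optional[int] = None                       # index of the response that issues it
    used_in: List[int] = field(default_factory=list)  # request indices it propagates to
    status: str = "unresolved"                         # "unresolved" | "validated" | "rejected"


class WorldView:
    """Hypothetical shared model of what is known about the application under test."""

    def __init__(self) -> None:
        self.values: Dict[str, DynamicValue] = {}

    def observe(self, name: str, origin: Optional[int], used_in: List[int]) -> None:
        # Observations in: repeated sightings of the same value merge into one record.
        dv = self.values.setdefault(name, DynamicValue(name))
        if dv.origin is None:
            dv.origin = origin
        for index in used_in:
            if index not in dv.used_in:
                dv.used_in.append(index)

    def context_for_ai(self) -> List[DynamicValue]:
        # Curated context out: only unresolved values whose origin no scanner found.
        return [v for v in self.values.values() if v.status == "unresolved" and v.origin is None]

    def record_validation(self, name: str, passed: bool) -> None:
        # Validation results flow back to confirm or correct the record.
        self.values[name].status = "validated" if passed else "rejected"


view = WorldView()
view.observe("session_id", origin=0, used_in=[1, 2])
view.observe("session_id", origin=0, used_in=[2, 3])
view.observe("odd_nonce", origin=None, used_in=[4])
print([v.name for v in view.context_for_ai()])  # ['odd_nonce']
```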

This is also what opens the path to smaller, more specialised models. When the data reaching the intelligence layer is already filtered, structured, and enriched by everything that came before it, the reasoning task becomes narrower. A purpose-built model that deeply understands regex extraction, or Groovy scripting, or correlation strategy doesn't need to be large — it needs to be precise, and it needs the right data. The World View provides that foundation.

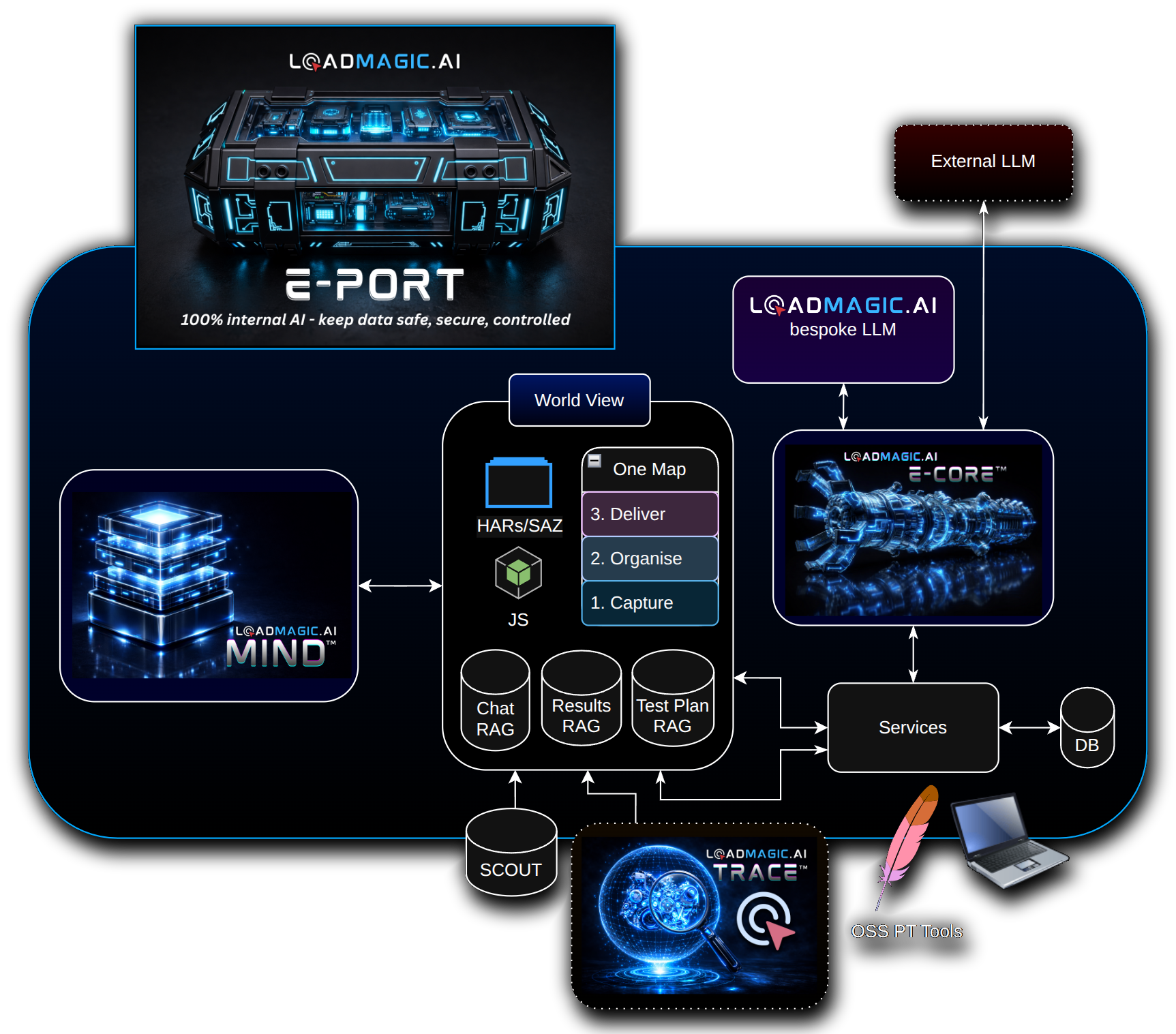

Platform Architecture

Five purpose-built systems work together to observe, decide, prove, and learn. Each does one job well and trusts the others to do theirs.


E-CORE

AI platforms typically lock customers into specific inference providers. GPU availability varies across environments. Enterprises need control over where and how AI inference runs, especially when operating in sovereign or restricted infrastructure.

E-CORE is LoadMagic's model-agnostic reasoning layer. It abstracts inference across cloud APIs, on-premises GPU infrastructure, and CPU-based fallback — without changing application logic. The platform adapts to whatever inference backend is available in the target environment, from AWS Bedrock to local Ollama instances.
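A model-agnostic layer of this kind is commonly built as a single interface with interchangeable backends, tried in preference order. The Python sketch below assumes that pattern. `InferenceBackend`, `StubBackend`, and `Router` are hypothetical names. The stubs make no network calls: they stand in for a cloud API, an on-premises GPU server, or a CPU fallback without reproducing any real provider SDK.

```python
from typing import List, Protocol


class InferenceBackend(Protocol):
    """What application code depends on; any provider that fits can be plugged in."""
    name: str

    def available(self) -> bool: ...

    def complete(self, prompt: str) -> str: ...


class StubBackend:
    """Stand-in for a real provider (cloud API, on-prem GPU, CPU); makes no network calls."""

    def __init__(self, name: str, up: bool) -> None:
        self.name = name
        self.up = up

    def available(self) -> bool:
        return self.up

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class Router:
    """Tries backends in preference order so callers never name a provider."""

    def __init__(self, backends: List[InferenceBackend]) -> None:
        self.backends = backends

    def complete(self, prompt: str) -> str:
        for backend in self.backends:
            if backend.available():
                return backend.complete(prompt)
        raise RuntimeError("no inference backend available")


router = Router([
    StubBackend("cloud-api", up=False),   # e.g. unreachable from this network
    StubBackend("onprem-gpu", up=False),  # e.g. no GPU in this environment
    StubBackend("cpu-fallback", up=True),
])
print(router.complete("propose an extractor"))  # [cpu-fallback] propose an extractor
```

Application code only ever calls `router.complete`. Swapping or reordering backends is a configuration change rather than a logic change.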

M.I.N.D.

Large language models don't learn from experience. Once trained, they repeat the same mistakes regardless of how many times they've encountered similar problems. Meanwhile, the applications under test evolve constantly: new authentication flows, changing token lifecycles, shifting API patterns. Every engagement starts from zero.

M.I.N.D. is LoadMagic's persistent learning system. It maintains confidence-weighted observations about the applications it analyses — which patterns work, which approaches fail, which behaviours are common across similar systems. Validated knowledge earns trust over time; unverified observations naturally decay. Insights that prove reliable are promoted, building a compounding knowledge base that improves with every session.
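The confidence mechanics can be sketched with two assumed rules:

- Each validation moves an observation's confidence part of the way toward 1 (success) or 0 (failure).
- Unpromoted observations decay exponentially with the time since they were last confirmed.

The 30-day half-life, 0.8 promotion threshold, and 0.3 update weight below are illustrative values, not M.I.N.D.'s actual parameters.

```python
from dataclasses import dataclass

HALF_LIFE_DAYS = 30.0   # assumed decay half-life for unverified observations
PROMOTE_AT = 0.8        # assumed confidence needed for promotion
UPDATE_WEIGHT = 0.3     # assumed step size per validation


@dataclass
class Observation:
    claim: str
    confidence: float = 0.5
    promoted: bool = False

    def validate(self, success: bool) -> None:
        # Move confidence part of the way toward 1.0 on success, 0.0 on failure.
        target = 1.0 if success else 0.0
        self.confidence += UPDATE_WEIGHT * (target - self.confidence)
        if self.confidence >= PROMOTE_AT:
            self.promoted = True

    def decay(self, days_unverified: float) -> None:
        # Promoted knowledge is kept; unpromoted observations fade exponentially.
        if not self.promoted:
            self.confidence *= 0.5 ** (days_unverified / HALF_LIFE_DAYS)


obs = Observation("nonce is echoed in the Location header")
for _ in range(3):
    obs.validate(success=True)
print(round(obs.confidence, 4), obs.promoted)  # 0.8285 True

stale = Observation("cart_ts is a Unix timestamp")
stale.decay(days_unverified=30)
print(stale.confidence)  # 0.25
```

With these settings, an observation starting at 0.5 is promoted after three consecutive successful validations (0.65, 0.755, 0.8285). One left unverified for 30 days falls from 0.5 to 0.25.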

TRACE

When a test fails, engineers see a red result but not why. Did the extractor miss? Did the application change? Was the value in a different location? Without runtime visibility into what happened during execution, debugging correlation failures is guesswork.

TRACE captures what happens during every test execution — which extractors fired, what values they captured, where substitutions were made, and where things went wrong. It creates a chronological record of the correlation pipeline at runtime, turning opaque failures into diagnosable events with clear resolution paths.
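A runtime trace of this kind can be modelled as an append-only, ordered event log that is queried after the run. The Python sketch below assumes three hypothetical event kinds (`extract`, `substitute`, `miss`). It is not TRACE's actual schema. It only illustrates how a chronological record turns a red result into a specific failing extractor and request.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class TraceEvent:
    seq: int                     # position in the log, preserving execution order
    kind: str                    # "extract" | "substitute" | "miss"
    variable: str
    request: str
    value: Optional[str] = None


@dataclass
class Trace:
    events: List[TraceEvent] = field(default_factory=list)

    def record(self, kind: str, variable: str, request: str, value: Optional[str] = None) -> None:
        self.events.append(TraceEvent(len(self.events), kind, variable, request, value))

    def first_failure(self) -> Optional[TraceEvent]:
        # The earliest miss is usually the root cause; later failures often cascade from it.
        return next((e for e in self.events if e.kind == "miss"), None)

    def history(self, variable: str) -> List[TraceEvent]:
        # Everything that happened to one variable, in the order it happened.
        return [e for e in self.events if e.variable == variable]


trace = Trace()
trace.record("extract", "csrf_token", "GET /login", "f9e8d7")
trace.record("substitute", "csrf_token", "POST /login", "f9e8d7")
trace.record("miss", "order_id", "GET /orders")  # extractor matched nothing
failure = trace.first_failure()
if failure is not None:
    print(failure.variable, failure.request)  # order_id GET /orders
```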

SCOUT

After correlation, how do you know if the script is production-ready? Manual review of every extractor, every request, every dynamic value is impractical at scale. Teams ship scripts without confidence in their completeness.

SCOUT analyses the completed script against the original recording and produces a structured quality report: coverage grades, risk flags, and specific recommendations. It answers "is this script ready?" with evidence, not hope. When something needs attention, SCOUT tells you exactly what and where.
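A coverage grade can be approximated by comparing two sets: the dynamic values detected in the recording, and the values the finished script actually correlates. The Python sketch below assumes that definition of coverage. Its grade bands (A at 95%, B at 85%, C at 70%) are invented for illustration; SCOUT's real grading criteria may differ.

```python
from typing import Dict, Set


def grade(coverage: float) -> str:
    # Assumed grade bands; illustrative only.
    for threshold, letter in ((0.95, "A"), (0.85, "B"), (0.70, "C")):
        if coverage >= threshold:
            return letter
    return "F"


def quality_report(recorded: Set[str], correlated: Set[str]) -> Dict[str, object]:
    """Compare dynamic values seen in the recording with those the script correlates."""
    if not recorded:
        return {"coverage": 1.0, "grade": "A", "risks": []}
    coverage = len(recorded & correlated) / len(recorded)
    return {
        "coverage": round(coverage, 2),
        "grade": grade(coverage),
        "risks": sorted(recorded - correlated),  # values still hard-coded in the script
    }


report = quality_report(
    {"csrf_token", "session_id", "order_id", "cart_ts"},
    {"csrf_token", "session_id", "order_id"},
)
print(report)  # {'coverage': 0.75, 'grade': 'C', 'risks': ['cart_ts']}
```

The risk list is the actionable part. It names the exact values that would still be replayed as stale snapshots.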

E-PORT

Regulated industries, data sovereignty requirements, and air-gapped environments mean many AI-powered tools simply cannot operate where the work needs to happen. Teams are forced to choose between AI capability and compliance.

E-PORT is LoadMagic's portability framework. A single codebase deploys as a managed SaaS service, inside customer VPCs, or in fully air-gapped environments with no mandatory data egress. Enterprises maintain full control over where their data lives and how it's processed, without sacrificing AI-powered capabilities.
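Portability of this kind usually comes down to configuration that is checked at deploy time. The Python sketch below assumes a hypothetical deployment profile in which air-gapped mode rejects any inference endpoint outside a list of internal domains. The `Mode` values, field names, and example hosts are illustrative, not E-PORT's configuration format.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List
from urllib.parse import urlparse


class Mode(Enum):
    SAAS = "saas"
    VPC = "vpc"
    AIR_GAPPED = "air_gapped"


@dataclass
class DeploymentProfile:
    mode: Mode
    inference_endpoints: List[str]
    internal_domains: List[str]  # hosts considered inside the customer boundary

    def egress_violations(self) -> List[str]:
        """Endpoints that would send data outside the boundary (enforced only when air-gapped)."""
        if self.mode is not Mode.AIR_GAPPED:
            return []
        violations = []
        for url in self.inference_endpoints:
            host = urlparse(url).hostname or ""
            inside = any(host == d or host.endswith("." + d) for d in self.internal_domains)
            if not inside:
                violations.append(url)
        return violations


profile = DeploymentProfile(
    Mode.AIR_GAPPED,
    ["http://ollama.internal.example:11434", "https://api.example-cloud.com/v1"],
    ["internal.example"],
)
print(profile.egress_violations())  # ['https://api.example-cloud.com/v1']
```

Failing the deployment on a non-empty violation list keeps "no mandatory data egress" a property that is checked rather than assumed.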

Design Principles

These are structural commitments, not aspirations. They shape every architectural decision we make.
